Hello there Lovelace attendees!

If you're reading this, chances are you've seen my poster at the Lovelace Colloquium in Sheffield. Hi! 👋

This blog is a place where I can keep a rather informal diary of the week's events, and every little hurdle and victory in between!

If you need a refresher on what the project is about, I wrote a blog post on it waaaay back when I first started in January - Project Overview

So... I thought I'd give you a quick run-through of where my project is, so you don't have to delve deeper into the blog posts (unless you want to, that is!).

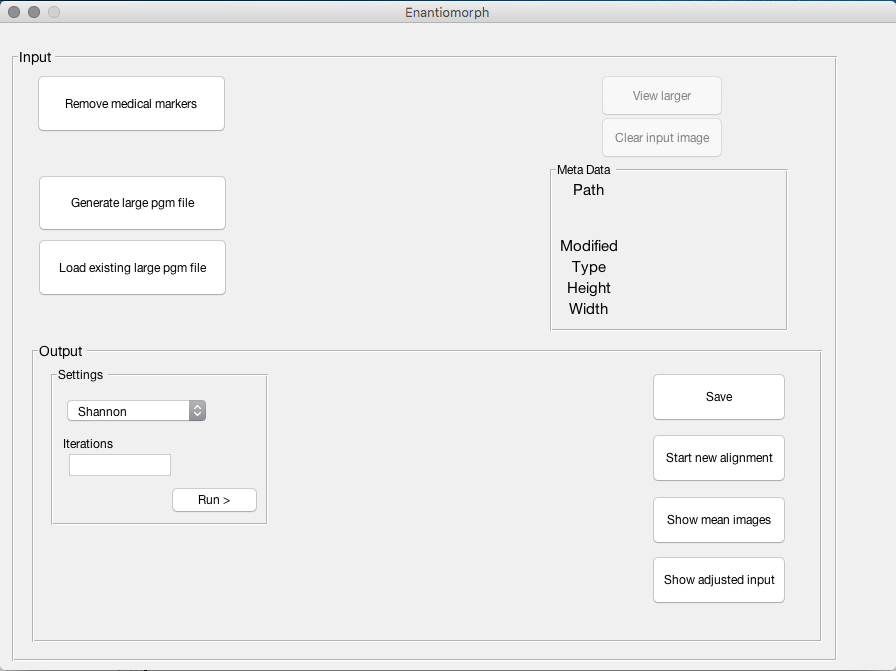

Here's the GUI as it stands now. All the buttons you see are fully functional (bar 'Start new alignment' - there's still a small bug here).

Why's it called Enantiomorph?

Well, I needed a shorter name for the software, and Enantiomorph's formal definition is: "Mirror image, form related to another as an object is to its image in a mirror."

As this project is all about making the scans look like one another, it seemed a fitting name to me!

So let's dive into some of the functionality...

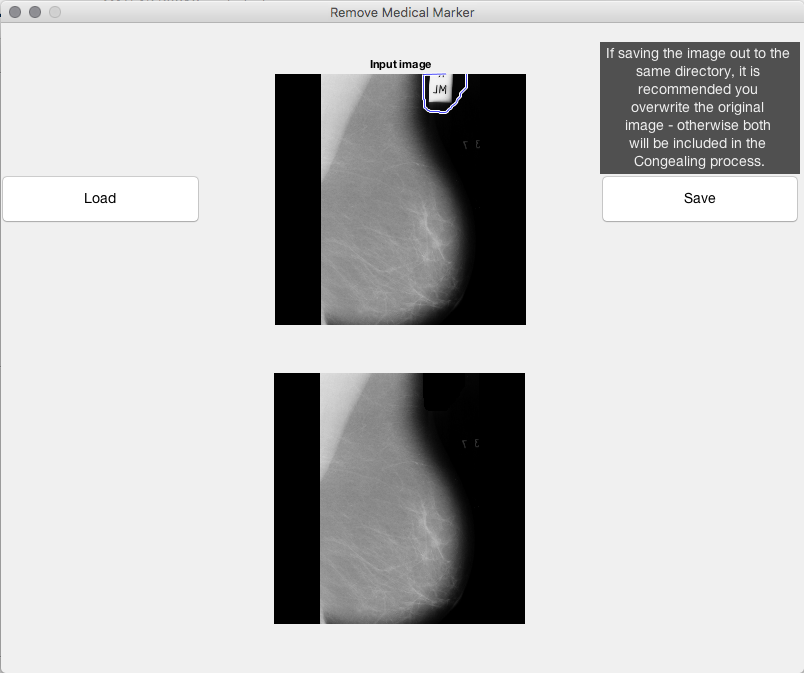

Remove medical markers

As you can see in the top image (and might've experienced if you've had an X-ray or indeed a mammogram), the scans often contain 'Medical Markers' - little clips which give information about the scan. In this case, ML = Mediolateral (the scan is taken from the side).

This is an issue when you're trying to average over a set of images, each possibly containing these clips, as the algorithm will try to 'Congeal' the clips (i.e. align them with one another). This then detracts from the alignment of the breast tissue.

To combat this, I created a little editing GUI for the user to go in and edit out any medical markers which might impede the alignment.

Generate large pgm / Load

In the future these two buttons may combine to be one - but for the time being I have kept them apart.

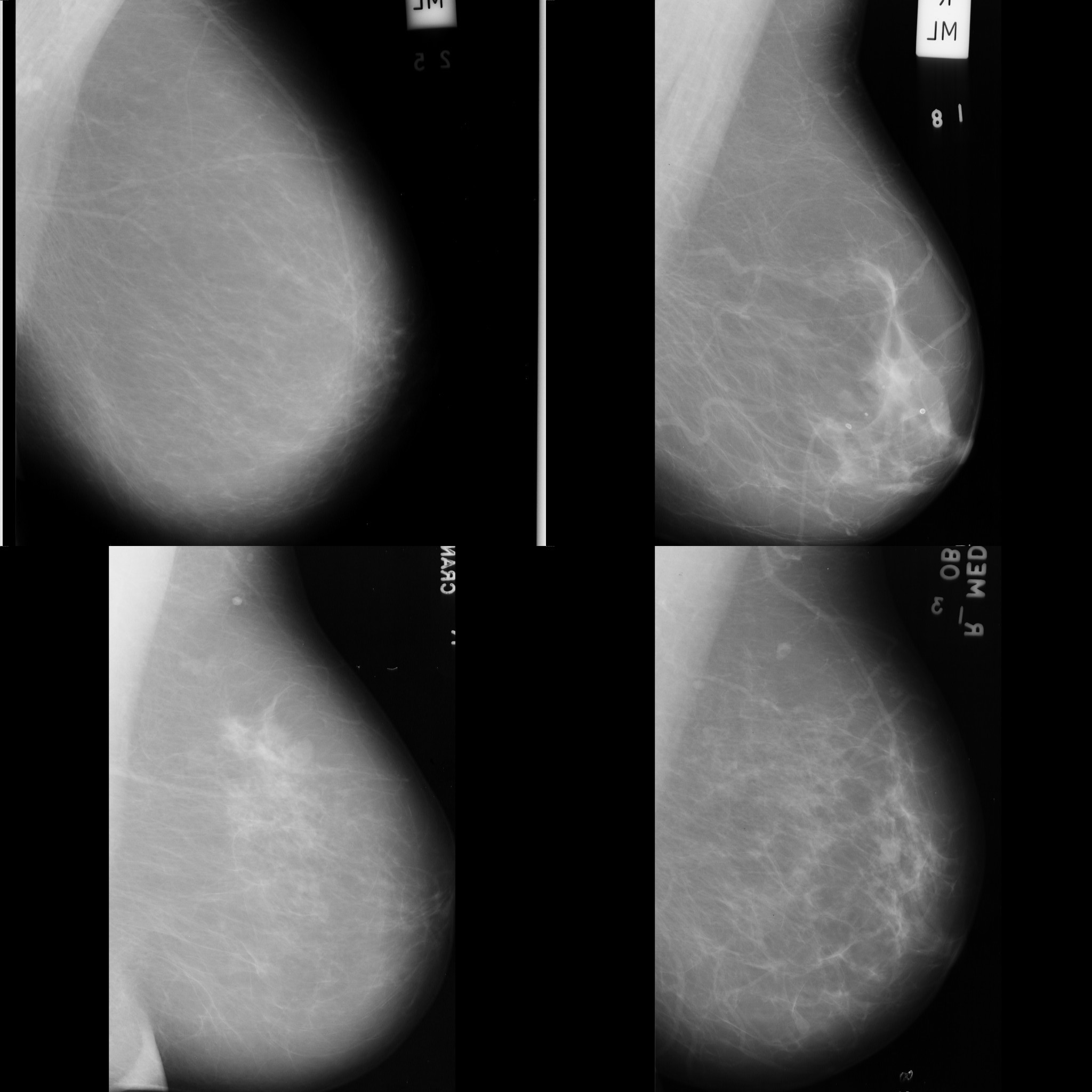

Generate takes a folder of images, creates a large pgm image like the one below and loads it into the GUI:

(Note the medical markers! This was before I could remove them)

This is the image that the Congealing process will work upon, taking each scan individually, even though they're all in one file!
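To make the "many scans in one file" idea a bit more concrete, here's a minimal sketch of how it could work. It is not the code from Enantiomorph: the function names (`stack_scans`, `to_pgm_bytes`, `split_scans`) are made up for illustration, and stacking the scans vertically is an assumption about the layout. Each scan is represented as a plain list of rows of grey levels (0-255).

```python
def stack_scans(scans):
    """Stack equally sized greyscale scans vertically into one large image.

    Assumption: a vertical layout. The real tool may tile scans differently.
    """
    height = len(scans[0])
    width = len(scans[0][0])
    for scan in scans:
        if len(scan) != height or any(len(row) != width for row in scan):
            raise ValueError("all scans must have the same dimensions")
    # Concatenate the rows of every scan, one scan after another.
    return [row for scan in scans for row in scan]


def to_pgm_bytes(image, max_grey=255):
    """Encode a list-of-rows image as a binary (P5) PGM file."""
    height, width = len(image), len(image[0])
    header = f"P5\n{width} {height}\n{max_grey}\n".encode("ascii")
    return header + bytes(value for row in image for value in row)


def split_scans(image, scan_height):
    """Recover the individual scans from the stacked image."""
    return [image[i:i + scan_height] for i in range(0, len(image), scan_height)]
```

Because every scan has the same height, the alignment step can slice the large image back into individual scans with `split_scans` whenever it needs to treat them separately.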

The Load button simply loads in one of these large images that was generated earlier.

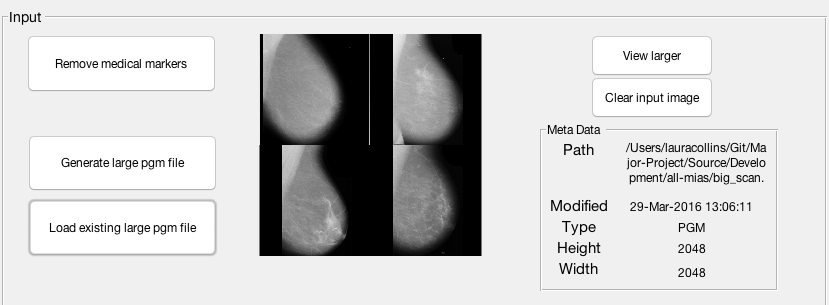

Other input image information

When an image is loaded in, metadata about it is displayed, along with buttons to maximise the image and clear the input, as shown below.

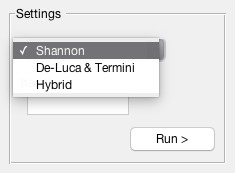

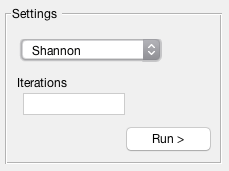

Alignment Metrics & Iterations

Once the image is loaded in, the user can then choose their alignment metric and the number of iterations they would like to run.

Iterations is a simple text box with validation to ensure the input is:

a) a number

b) greater than 0

c) less than or equal to 100
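The three checks above could be sketched like this. This is only an illustration, not the GUI's actual code: `parse_iterations` is a hypothetical name, and I'm assuming "a number" means a whole number.

```python
def parse_iterations(text, minimum=1, maximum=100):
    """Return the iteration count as an int, or raise ValueError with a reason.

    Assumption: the text box should only accept whole numbers.
    """
    try:
        value = int(text.strip())  # check (a): is it a number?
    except ValueError:
        raise ValueError(f"{text!r} is not a whole number") from None
    if value < minimum:  # check (b): greater than 0
        raise ValueError("iterations must be greater than 0")
    if value > maximum:  # check (c): less than or equal to 100
        raise ValueError(f"iterations must be less than or equal to {maximum}")
    return value
```

Raising an error with a readable message means the GUI can show the reason next to the text box rather than just silently rejecting the input.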

When the user hits run, the alignment metric chosen, the iterations and the image loaded in are fed into the Congealing algorithm running in the background.

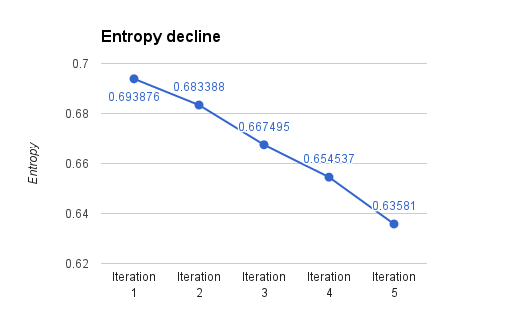

It's important to remember that the idea of this project is to reduce the entropy across the images, to make them more alike.

The alignment metrics

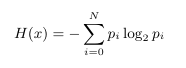

Shannon

Shannon specifies that the standard Shannon Entropy is to be used for aligning the scans. Shannon Entropy is the algorithm implemented in the original Congealing demo.

This is a quick metric; however, it lacks the 'fuzziness' / 'possibilistic' edge that the other two metrics have.

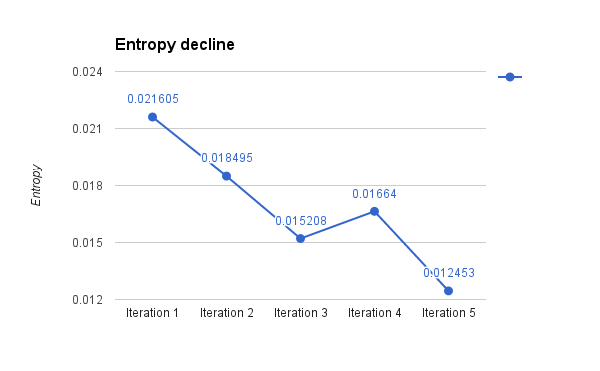

Those familiar with Shannon will no doubt recognise its equation below:

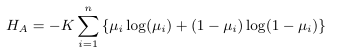
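For anyone who prefers code to equations, here's a small sketch of Shannon entropy, H = -Σ p log2(p), applied the way I understand Congealing to use it: the entropy is measured at each pixel position across the whole set of images and then summed. The function names and the list-of-rows image representation are assumptions for illustration, not the demo's actual code.

```python
import math
from collections import Counter


def shannon_entropy(values):
    """Shannon entropy (in bits) of the empirical distribution of `values`."""
    counts = Counter(values)
    total = len(values)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def stack_entropy(images):
    """Sum of the per-pixel entropies across equally sized images.

    Each image is a list of rows; each row is a list of grey levels.
    """
    height, width = len(images[0]), len(images[0][0])
    return sum(
        shannon_entropy([image[y][x] for image in images])
        for y in range(height)
        for x in range(width)
    )
```

If every image has the same grey level at a pixel, that pixel contributes nothing, so a perfectly aligned set of identical scans has a total of 0. The more the scans disagree, the higher the total.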

It is important to note that the entropy values from Shannon entropy and from the fuzzy algorithms are not comparable, because Shannon entropy does not capture possibilistic uncertainty. However, for your reference, please see the graph below for Shannon's performance over 5 iterations.

De Luca & Termini

This alignment metric is better known as 'Non-Probabilistic Entropy' and is something I should rename throughout my project now that I know the full name.

This was the first fuzzy entropy algorithm I implemented, and De Luca & Termini are actually considered the first to have extended Shannon entropy (from before) to give it this fuzzy measure.

Below is the Non-probabilistic entropy equation:
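For reference, the form of De Luca & Termini's measure that usually appears in textbooks is H = -K Σ [μ ln(μ) + (1 - μ) ln(1 - μ)], where each μ is a membership value between 0 and 1 and K is a positive constant. The sketch below implements that textbook form only. It is not necessarily how my implementation does it, and how grey levels become membership values (for example, dividing by 255) is left as an assumption.

```python
import math


def _x_log_x(m):
    """Return m * ln(m), using the usual convention that 0 * ln(0) = 0."""
    return 0.0 if m <= 0.0 else m * math.log(m)


def de_luca_termini_entropy(memberships, k=1.0):
    """Non-probabilistic entropy of a fuzzy set.

    H = -k * sum(mu * ln(mu) + (1 - mu) * ln(1 - mu))
    Each membership value mu must lie in [0, 1].
    """
    total = 0.0
    for mu in memberships:
        if not 0.0 <= mu <= 1.0:
            raise ValueError("membership values must lie in [0, 1]")
        total += _x_log_x(mu) + _x_log_x(1.0 - mu)
    return -k * total
```

The measure is 0 when every membership is exactly 0 or 1 (nothing is fuzzy) and largest when every membership is 0.5 (maximum fuzziness).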

And here is the entropy decline over 5 iterations:

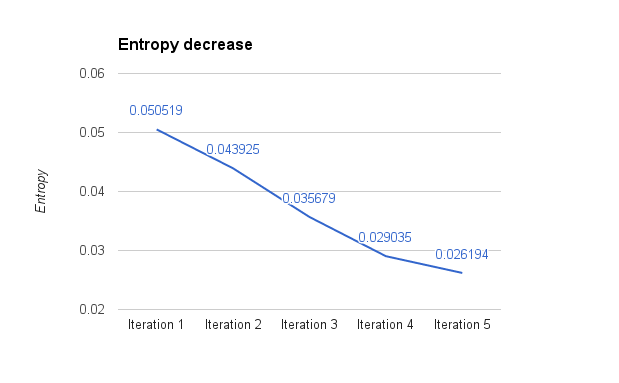

One area where this algorithm falls down - and this may be due to my implementation rather than anything else - is the time it takes to process each iteration, as shown here:

As you can see, the red bar (this algorithm) is performing very badly compared to its counterparts.

Hybrid Entropy

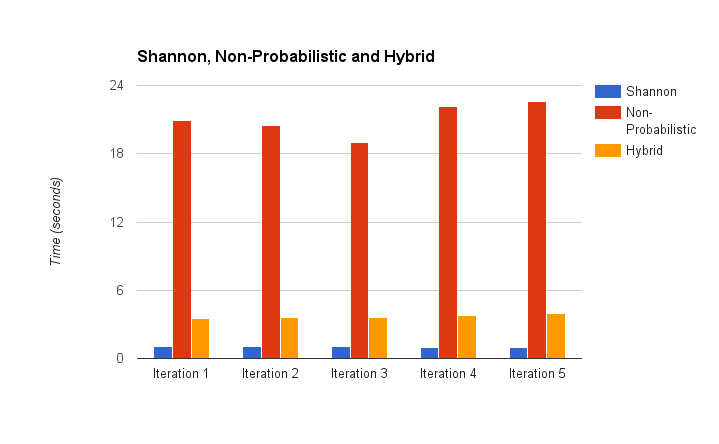

I am currently implementing Hybrid entropy - however, initial experiments show it to be quick (as seen above) and good at reducing the entropy across the images:

What you can see at Iteration 3 is a local optimum - the Congealing algorithm then went on to increase the entropy, which allowed it to go one step further down at the fifth Iteration.

I thought I had Hybrid Entropy implemented - as blogged about here - however I think there are still some alterations to be made to the mathematics, which I am in the process of making right now!

But what about that other alignment metric?

Some of the more eagle-eyed of you may have spotted I mentioned 'Fuzzy Shannon Entropy' on my poster... however after discussing this with my supervisor, we decided that I was not going to implement it.

The reason is that it is a 'characterisation of a fuzzy entropy' and is therefore probably not all that useful to us in this instance, nor all that different from the original Shannon Entropy. De Luca & Termini's Non-probabilistic entropy actually extends this equation into something much better.

Unfortunately I did not have time to edit my poster before today - so apologies everyone!

Final word

Well, what I had hoped would be a quick 'whistle stop tour', as they say, turned into more of an essay (sorry!).

If you want to know more, please feel free to have a peruse through my blog, and of course, if you have any questions - come back to the poster and say hi!

If I'm not at the stand, my email address is: lauracollins12@googlemail.com

Hope you all have a great day! 😄

Laura